OK, I'm gonna make a new thread for this, as I'm a bit past “very first impressions” and have given it some more playtime…

I think you should do an introduction to painting with InstaMat; it's not as intuitive as I'd like it to be.

I might be wrong, but it doesn't seem like it's your main focus, which is more centered on the procedural creation of materials.

Which is completely fine and probably more useful for mass-creation pipelines than for individual texturing.

I've also played around with adding graphs as effects to layers and it's really cool: painting a simple mask with a few brush strokes and then transforming it with blur- and erosion-like filters can quickly create some really nice hand-painted effects, for example.

But I'm still not getting some of the very basics, or they just need a proper tutorial to guide newcomers through the process.

So, apologies if I assume stuff isn't there that actually is, or if I just did it wrong. Either way, that's still a good reason to provide us with a painter-centric tutorial…

Here are a couple of things I've been struggling with:

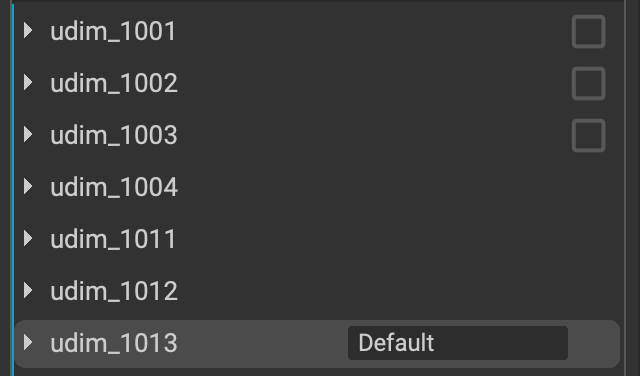

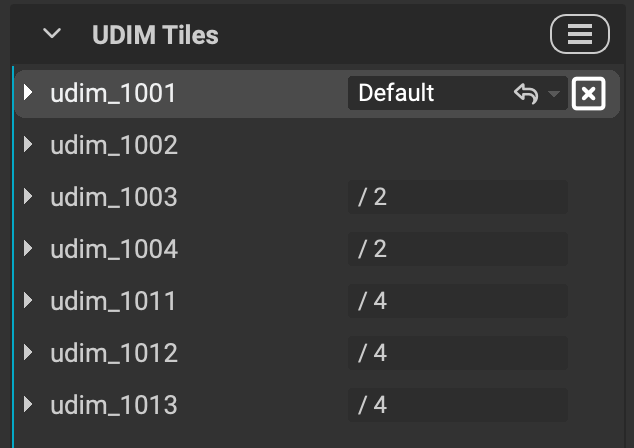

- I can't make UDIMs work.

I also couldn't find the info in the manual.

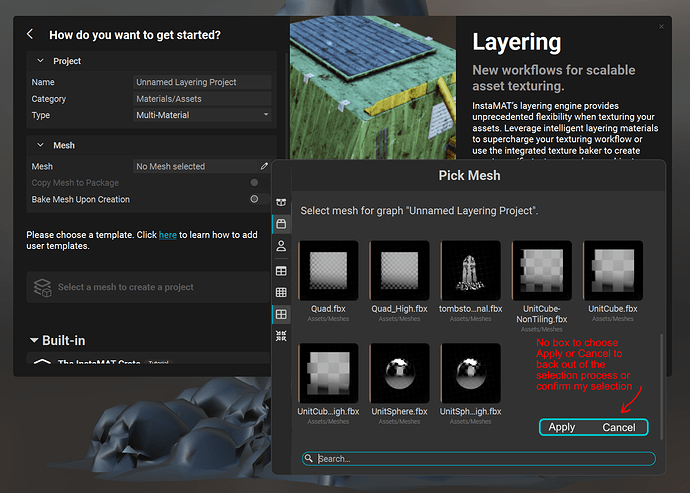

I chose “UDIM” after creating a new “layering” project.

I picked my UDIM FBX file, then added all the UDIM images.

The mesh shows up correctly in the package with all UDIMs, and you can see at the beginning how the UDIM tiles are separately filled with blue.

But when I try to assign the first UDIM image, all the tiles get THAT image assigned, instead of each one automatically getting its matching image. I also can't drag all of them in at once.

I've tried renaming the suffix from “_1001” to “.1001”, but that didn't make a difference: whatever tile I pick gets mapped correctly, but none of the others show up, and that one image simply gets projected onto ALL tiles.

There is also a selection at the top of the layer stack where you can directly select a UDIM tile, but I couldn't figure out what it's for.

At first I thought it might be an issue with the MetaHuman mesh I was using, but I tried another UDIM mesh that I knew was working, since I had already used it in some renders, and I had the same issues.

I then also baked the mesh data, hoping that those maps would show up properly or that I could figure out what exact suffix I'd have to use, but those were not mapped correctly either.
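For reference, this is the numbering I'm assuming when I name my files. It's the usual UDIM convention (tile = 1001 + u + 10 * v, with u from 0 to 9). The Python sketch below is only there to illustrate it: the function names are made up, it is not InstaMat code, and which separator InstaMat actually expects is exactly what I don't know, so it accepts both “_” and “.”.

```python
import re

# A four-digit tile number preceded by "_" or "." right before the file extension.
# Which separator InstaMat expects is unknown to me, so both are accepted here.
UDIM_PATTERN = re.compile(r"[._](\d{4})\.[^.]+$")


def parse_udim(filename):
    """Return the UDIM tile number found in a file name, or None if there is none."""
    match = UDIM_PATTERN.search(filename)
    if match is None:
        return None
    tile = int(match.group(1))
    return tile if tile >= 1001 else None


def udim_to_uv(tile):
    """Convert a UDIM tile number to its (u, v) tile offset.

    Standard convention: tile = 1001 + u + 10 * v, with u in 0..9.
    """
    if tile < 1001:
        raise ValueError(f"not a valid UDIM tile: {tile}")
    index = tile - 1001
    return index % 10, index // 10


if __name__ == "__main__":
    for name in ["head_color_1001.png", "head_color.1002.png", "body_color_1011.png"]:
        tile = parse_udim(name)
        print(name, tile, udim_to_uv(tile))
```

So with 11 tiles I'd expect something like 1001 to 1010 in the first row and 1011 starting the second row, depending on how the mesh is laid out.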

I CAN however also paint across all tiles.

- Brushes

I have somewhat more extensive feedback here:

A) Brush palette

I know you can probably already do powerful things with brushes by utilizing graphs etc.

I'm used to workflows, though, where I can quickly pick brushes from a palette, and I'm missing that option here. A dedicated panel to pick brushes, or a simpler way to navigate them, would be greatly appreciated.

B) It took me a while to find the pen dynamics settings. While it's great to have granular control over what pen pressure affects and by how much, I'd prefer an additional button to simply enable/disable pen pressure. I also don't know if you can save these as default settings for a brush.

Like, I wanna have a default soft round brush that simply maps pen pressure to size and opacity, and I don't wanna set that up again for every project.

C) Speaking of defaults and saving brushes: I haven't yet figured out how to save my own custom brushes.

If you wanna use InstaMat mainly for manual texture painting, this is pretty essential.

D) Brush dragging.

Both ZBrush and Blender have the option to drag out a brush with an alpha. I couldn't find that option. Having decals is great, but that's a different workflow. For quickly painting a bunch of veins, for example, I'd love to just drag and scale/rotate the alpha with a brush.

E) Color variation.

It would be quite useful to also have color variation in the brush settings.

Like, either using a gradient (mapping it to the black and white values of an alpha can be very useful) or random hue/value variation.
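To make clearer what I mean by both variants, here is a rough Python sketch. It's purely illustrative and has nothing to do with how InstaMat handles brushes internally; `jitter_color`, `gradient_color` and their parameters are names I made up.

```python
import colorsys
import random


def jitter_color(rgb, hue_jitter=0.05, value_jitter=0.1, rng=random):
    """Return rgb (floats in 0..1) with a random hue and value offset, e.g. per dab."""
    h, s, v = colorsys.rgb_to_hsv(*rgb)
    # Hue wraps around the color wheel, value is clamped to the valid range.
    h = (h + rng.uniform(-hue_jitter, hue_jitter)) % 1.0
    v = min(1.0, max(0.0, v + rng.uniform(-value_jitter, value_jitter)))
    return colorsys.hsv_to_rgb(h, s, v)


def gradient_color(alpha_value, dark, light):
    """Map a grayscale alpha value (0 = black, 1 = white) onto a two-color gradient."""
    t = min(1.0, max(0.0, alpha_value))
    return tuple(d + (l - d) * t for d, l in zip(dark, light))


if __name__ == "__main__":
    base = (0.8, 0.3, 0.2)
    rng = random.Random(42)  # fixed seed so the variation is repeatable
    for _ in range(3):
        print(jitter_color(base, rng=rng))
    print(gradient_color(0.5, (0.2, 0.0, 0.0), (1.0, 0.8, 0.7)))
```

The first function is the "random variation per dab" case, the second one is the "gradient driven by the alpha's grayscale values" case.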

F) Quick color switcher

As I've mentioned before: having a simple background/foreground color switcher can also make painting a lot easier.

G) Brush/Erase

For masking, it's enough to just be able to switch between painting and erasing. That should be doable using B and E, but for me those shortcuts don't work right now.

I can switch with E, but then I can't switch back with B.

I also couldn't find these shortcuts in the preferences to reassign them.

H) Alpha/Texture vignette.

I couldn't find an option to quickly apply a radial mask, for example to vignette a rectangular alpha.

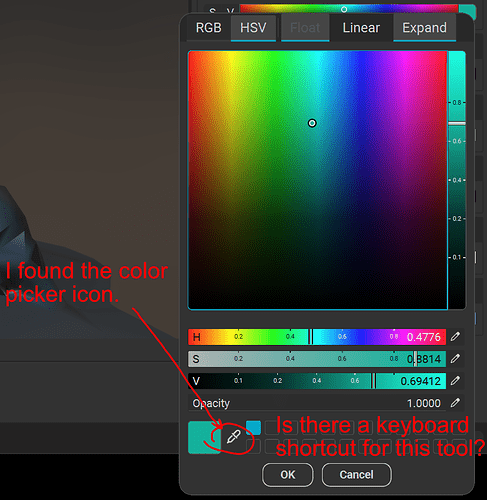

I) Shortcuts/Preferences

It seems like a lot of shortcut actions are still missing for painting.

I was also missing the option to pick a tablet API (are you using WinTab by default?), which is useful in some cases.

- Working with meshes

I mentioned this in my previous post:

Having some more options when working with imported FBX meshes would be very useful as well.

Stuff like:

A) If you have a mesh consisting of several parts, but you exported it as ONE mesh:

It would be great if you could pick which part to paint on within that FBX.

B) Being able to add objects to a layering project.

For example: after I cleaned up the MetaHuman so that it only consists of the merged body/head mesh, I saved the eyes/mouth/teeth in separate files. But of course it would be nice to texture all of them in context with each other.

C) Being able to hide parts of the mesh while painting.

Using a lasso selection, or picking parts of a mesh by feature (connected polys or material IDs), to hide parts of the mesh while painting would be super useful.

- Projection painting.

I noticed a button for projection painting in the viewport of the layering project, but I couldn't figure out what it does or how it works.

I hope it works similarly to ZBrush's “Spotlight” feature:

You load an image that overlays the mesh you wanna paint, and then you can project it onto the mesh from different angles.

Is that it, and if so, how exactly do I use it?

- UV manipulation

A) I think if you offer a layering template that is meant for texturing, you may wanna consider making “UV” projection mode the default instead of triplanar. Or even better, make it “sensitive”: if the mesh you're adding HAS UVs, use UV projection. If it doesn't, use triplanar.

That's also something that threw me off a few times, as my textures seemed to be mapped completely wrong when the projection was just set to triplanar.
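In pseudo-terms, the “sensitive” default I have in mind is no more than this. It's a minimal Python sketch, with the mesh's UVs represented as a plain list of (u, v) tuples and a made-up function name, not anything from InstaMat itself:

```python
def default_projection(uv_coords):
    """Pick the default projection mode for a newly added mesh.

    uv_coords is a hypothetical list of (u, v) tuples, or None/empty
    when the mesh was exported without UVs.
    """
    return "uv" if uv_coords else "triplanar"


if __name__ == "__main__":
    print(default_projection([(0.0, 0.0), (1.0, 0.5)]))  # mesh with UVs
    print(default_projection(None))                      # mesh without UVs
```

The user could still override the mode per layer; this would only change what you get when you don't touch the setting.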

B) I was also looking for an option to flip textures and couldn't find one. ZBrush, for example, can flip textures the wrong way, and it would be great if I could correct that directly in InstaMat instead of having to re-export them first.

- Performance

First of all:

I was a bit confused at first about the texture resolution I was working with.

Only later did I understand that the texture resolution I pick in the viewport is NOT my final resolution; it's merely the preview resolution.

Which is great, because the performance on my mesh with 11 UDIM tiles in 4K was NOT that great.

It's rather unworkable, to be honest.

Even on my RTX 3090 workstation with 64 GB RAM, a simple brush stroke with just ONE layer active was really laggy.

Working at 2K or even 1K for the basic texturing gives pretty good performance, but I'm not sure how to handle the high-fidelity details at that resolution.
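Just to put numbers on why the resolution matters so much with this many tiles, here is a back-of-the-envelope Python calculation. It assumes uncompressed 8-bit RGBA and counts a single map only; I don't know what formats InstaMat uses internally, so treat it as a lower bound for one channel of one layer, not as a measurement.

```python
def texture_set_mib(resolution, tiles, channels=4, bytes_per_channel=1):
    """Uncompressed size in MiB of one square map across all UDIM tiles.

    Assumes 8-bit RGBA by default; real internal formats may differ.
    """
    size_in_bytes = resolution * resolution * channels * bytes_per_channel * tiles
    return size_in_bytes / (1024 * 1024)


if __name__ == "__main__":
    for resolution in (1024, 2048, 4096):
        print(f"{resolution}px x 11 tiles: {texture_set_mib(resolution, 11):.0f} MiB per map")
```

Under those assumptions, one map across my 11 tiles is about 44 MiB at 1K, 176 MiB at 2K and 704 MiB at 4K, and that gets multiplied by every channel and every layer in the stack.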

Is there maybe a way, for example, to completely hide tiles I'm not working on, so I can work on the 4K tiles one by one, or together with just the adjacent tile if I need to paint across them?

- What exactly is the unified material setup?

I'm still a bit uncertain about what it does compared to the material layering or the UDIM approach:

Let's stick to my MetaHuman example: as the UVs are laid out as UDIMs by default, instead of all in the 0-1 UV range, I HAVE to use UDIM anyway.

But if I instead had one mesh with separate material IDs for the body and head, for example, and the UVs were laid out in the 0-1 range for each material ID:

Would the unified material layer approach then let me paint across the seams if I created one material for the head and another one for the body?

- SSS

Is it possible to preview subsurface scattering in the viewport? I know you can add subsurface channels, but it didn't look like subsurface when I painted on it.

That's it for now. I hope you can provide a quick answer for the UDIM issue at least, as that is mostly what's preventing me from diving deeper into the workflow and figuring out how to use it for the personal project I'm working on right now.

I hope that after my current deadlines I'll have some more time to evaluate the procedural material creation part as well, and how we could use it at my work for texturing assets in a more automated way.

I already tried mesh baking, and that's pretty fast on the GPU. As far as I understood, I could use naming conventions to mass-bake whole folders of assets this way (maybe including auto-UVing?), then run them through a procedural material graph to bake in some more complex noise textures, and then send them all out to Unreal, where we can use the baked information for procedural shading. Right now we're completely relying on triplanar mapping in Unreal, and that has its limits when it comes to deforming meshes.