Ability to use advanced GUI controls in custom nodes, like the ones found in the Transform or Mesh Render Depth nodes. Currently, those UIs appear to be built in and inaccessible to users building their own nodes.

Justification

Being able to add GUI controls like those in the nodes above to our own custom nodes would be a significant UX improvement for anyone developing custom nodes, which I reckon is a large share of the user base.

Implementation Details

The user would expose parameters of the data types that each GUI element needs as input. Substance allows for functionality like this, and I think it would go a long way for UX if artists could edit data with more tactile GUI elements.
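A rough sketch of the idea, not a description of how the application works internally: the editor could pick a widget from the type of each exposed parameter and fall back to a slider otherwise. The type names (`vec2`, `vec3`, `matrix3`), the widget names, and the `ExposedParam` class are all hypothetical and only illustrate the mapping.

```python
from dataclasses import dataclass

# Hypothetical table: exposed parameter type -> GUI widget to offer.
# None of these names come from the application; they are placeholders.
WIDGET_FOR_TYPE = {
    "vec2": "position_handle",      # e.g. place a shape on a 2D texture
    "vec3": "orientation_handle",   # e.g. orient a render camera
    "matrix3": "transform_gizmo",   # e.g. move / rotate / scale in the preview
}


@dataclass
class ExposedParam:
    """A parameter that a custom node exposes to the editor (hypothetical)."""
    name: str
    type: str


def pick_widget(param: ExposedParam) -> str:
    """Return the widget for a parameter, defaulting to a plain slider."""
    return WIDGET_FOR_TYPE.get(param.type, "slider")


if __name__ == "__main__":
    for p in (ExposedParam("offset", "vec2"), ExposedParam("gain", "float")):
        print(p.name, "->", pick_widget(p))
```

With a table like this, a custom node would only need to declare its parameter types, and any type without a dedicated widget would keep today's slider behaviour.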

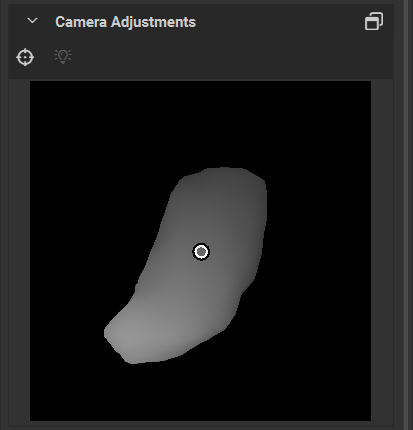

EX.1 By exposing a Vec2/Vec3 parameter, the user would get a handle GUI for tasks such as rotating the camera of the Mesh Render nodes or placing a shape somewhere on a 2D texture.
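To illustrate the 2D case, here is a minimal sketch of how a dragged handle could write back into an exposed Vec2 parameter. It assumes the parameter is a normalized position in the 0..1 range and that the preview reports the handle position in pixels; the function name and both assumptions are mine, not taken from the application.

```python
def handle_to_vec2(px: float, py: float, width: float, height: float) -> tuple[float, float]:
    """Convert a handle position in preview pixels to a normalized Vec2.

    Assumes (hypothetically) that the exposed parameter is a 0..1 position
    on the texture. Values are clamped so the handle cannot leave the preview.
    """
    u = min(max(px / width, 0.0), 1.0)
    v = min(max(py / height, 0.0), 1.0)
    return (u, v)


if __name__ == "__main__":
    # Dragging the handle to pixel (128, 64) in a 256x256 preview.
    print(handle_to_vec2(128, 64, 256, 256))
```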

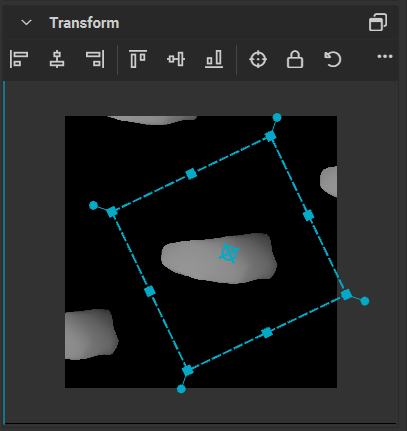

EX.2 By exposing an appropriate Matrix parameter, or perhaps a series of Vectors that together make up a Matrix, the user would get a Transform GUI for tweaking these values more intuitively than with sliders.
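As a sketch of the data such a Transform GUI would have to produce, the snippet below composes a 3x3 homogeneous 2D matrix from the translation, rotation and scale a gizmo would let the user drag. The function name, the parameter layout and the translate-rotate-scale order are assumptions for illustration; the existing Transform node may store its data differently.

```python
import math


def compose_transform(tx: float, ty: float, angle_deg: float,
                      sx: float, sy: float) -> list[list[float]]:
    """Build a 3x3 homogeneous 2D matrix equal to T * R * S.

    Hypothetical helper: scale is applied first, then rotation, then
    translation, which is one common convention among several.
    """
    a = math.radians(angle_deg)
    c, s = math.cos(a), math.sin(a)
    return [
        [c * sx, -s * sy, tx],
        [s * sx,  c * sy, ty],
        [0.0,     0.0,    1.0],
    ]


if __name__ == "__main__":
    # Values a user might set by dragging the gizmo instead of five sliders.
    for row in compose_transform(0.25, -0.5, 90.0, 2.0, 1.0):
        print(row)
```

The same five values could just as well be exposed as a couple of Vectors and assembled into the Matrix inside the node, which is the alternative mentioned above.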